At Cloud Field Day 3 we visited the Veritas office, where they presented their cloud vision to us. It took a little while to ramp up, as I detailed in my post here. My fellow CFD delegate Martez Reed has already put together an excellent post detailing the high-level view of what Veritas had on display, so I won’t rehash that now. Instead, I would like to focus on their CloudPoint offering and actually try to take it for a spin. Let’s see how far I get.

I went to the main Veritas site and I saw the Multi-Cloud offering on the front page. That’s a good start, so I clicked the link to “Discover” it. Now I feel like an explorer riding in my dirigible searching for the mythical cloud city of Tepulteca.

Alright, with my aviator goggles tightly fastened, I navigated the next WordPress page. Aha, I have struck gold! There is a section for CloudPoint. Two clicks and I am already on the product page. Sadly, I consider that a success in the world of modern web design.

And now a button advertising that I can “Get it free.” Pardon me for being slightly sceptical, but there’s still no such thing as a free lunch. Nevertheless, into the maw of the beast I must go. For science!

Ack! The dread form of incessant calling, emails, and other unwanted communication.

Oooh, and a T&C link. Pardon me while I read 64 pages of legal jargon…

…reading…

Well that’s interesting: “You do not intend to use or access, nor will allow any other person to use or access, the software for any purpose prohibited by United States law, including without limitation, for the development, design, manufacture or production of nuclear, chemical or biological weapons of mass destruction.”

Not sure how I would use cloud backup software to accomplish that, but it’s important nonetheless, I suppose. Can we also put a provision in there about not eating unicorns when using the software? It’s an objectionable practice and Veritas needs to take a stand!

…reading…

Then there’s this: “Licensee shall keep accurate business records relating to its use of the Licensed Software for a period of three (3) years following termination of this Agreement. Upon request from Veritas, Licensee shall provide Veritas with a report certifying the destruction of Licensed Software pursuant to Section 3. The provisions regarding license restrictions, confidentiality, audit, exclusion of warranty, and the general provisions in Section 8 will survive expiration of the evaluation Term or termination of this Agreement.”

I need to keep business records relating to trial software for three years? And I need to be able to provide a report certifying the software’s destruction? Wow, um, maybe I don’t want to try it that badly. No, I must. For science!

…reading…

Ah, now there’s this gem: “Licensee shall not disclose the results of any benchmark tests run on the Licensed Software without Veritas’ prior written consent”

It was snuck into a section about confidentiality. I can try this thing, but I can’t really tell people how well it works unless I clear it with Veritas first. And I’m totally sure they will be okay with numbers that aren’t flattering, right? Right?

…reading…

Lastly, this agreement is tied to their general license agreements page, which includes two more four-page documents that continue in this vein. I will spare the reader any more “excitement.” Suffice it to say that they are largely more of the same.

Okay, so here we go. I am filling out the form and getting the software.

Whew, that was easy. It also gave me a download that is taking rather a long time. In the meantime, I have a couple of comments. First, I immediately got this email, which is perfect:

Except I don’t see a link to CloudPoint documentation or a getting started video. That should definitely be in there. Second, here is the download screen.

That screen should have the same content as the email, and a link to a Getting Started document. Make this easy for me Veritas!

Well, I walked away to go get lunch, since the download was taking a bit of time. In total you are looking at a 1.6GB file. Don’t go downloading it on a hotspot at the airport. The file is Veritas_CloudPoint_2.0.1_IE.img.gz. And since I didn’t get directed to any kind of getting started docs, I have no idea what to do with this monster. Crack it open with 7-zip? Sure, why not.

Well, that’s not helpful, is it? Based on the contents of the JSON files, I gather that this is a container-based application. To the docs, then! In case you are trying to find the docs, I’ll help. There were NO links to the docs on the main product site or on the main community page. But if you open the pinned post “Welcome to the CloudPoint Community” and scroll down to Helpful Resources, you will find the link. Or use this one, except don’t, for reasons that will become apparent a few paragraphs down. *[Foreshadowing]*

By the way, Amit was one of the presenters at CFD. He’s a good dude, highly recommend chatting with him if you get the chance.

I’ve got the docs and I’m reading through. The CloudPoint install is in fact a Docker image based on Ubuntu 16.04 LTS. You will need a suitable host to run it. That host can be in the cloud or on-premises. They recommend a physical host, but I don’t see any reason you couldn’t use a virtualized host with sufficient resources. I’m going to use Microsoft Azure for my host, since I have credits there.

Wait a second…

I could have saved myself a LOT of trouble and just used the Azure Marketplace image. The question is whether to deploy my own Ubuntu host or use the marketplace image. The answer, of course, is the marketplace image, since I only have so much time here. I deployed the marketplace image into a Resource Group I called CloudPointMarket. I ended up using the D2s_v3 instance size and added a 64GB Premium disk for data, which is the recommended sizing from the docs. The deployment was successful, so I am going to try to connect to the box. Based on the NSG rules created by the template, it looks like I can connect via SSH and HTTPS.

Or not.

SSH it is then.

So, erm. Now whut? Haha! Joke’s on me. I’ve been looking at the CloudPoint 1.0 manual, and I should be looking at the CloudPoint 2.0 manual, which is here. Fortunately, the Microsoft Azure Marketplace item had a link to the CloudPoint support site. Which, again, SHOULD BE IN THE INTRO EMAIL AND DOWNLOAD PAGE.

Since finding that document took me so long, I decided to check back on the website. Hey, turns out it was just taking a while for the container to initialize.

Filled out the form, and I’m all a-twitter with excitement.

Heh, sweet. Let’s back up some cloud.

I want to try a few things:

Let’s start with Azure VM. I click on Manage cloud and arrays and it brings me to a menu.

I’m guessing I need to click on the Microsoft Azure cloud to add one. Voila!

Click on Add configuration and we’re off to the races.

Of course, it doesn’t really guide me through the process beyond asking for this information. There should at least be a (?) button to help me out here. Instead, I clicked through to the documentation that launches from the main page.

Under the unhelpfully titled Configuring off-host plug-in section, I found the Microsoft Azure plug-in configuration notes with this handy table.

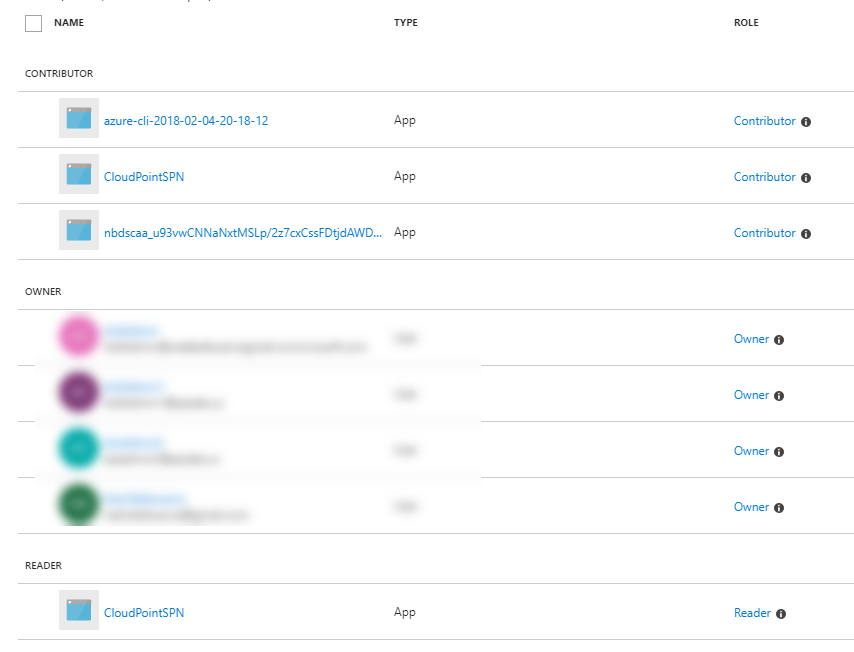

The link will take you to directions for creating a service principal using the portal, but I highly recommend using the Azure CLI in Azure Cloud Shell instead. You can find directions for that here. I have a VM named BackupTest in a resource group called BackupTest, so I want to create a service principal with the rights necessary to back up VMs in BackupTest.

Here are the CLI commands. Be sure to swap the AppID placeholder for the actual AppID created by the first command, and make note of the password and tenant ID as well. You’re going to need those.

az ad sp create-for-rbac --name CloudPointSPN

az role assignment delete --assignee "AppID" --role Contributor

az role assignment create --assignee "AppID" --role Reader

az role assignment create --assignee "AppID" --role Contributor --resource-group BackupTest

The documentation doesn’t actually specify which role is required on which resources, so I am going to try giving the SPN Contributor on the resource group and Reader on the subscription. We’ll see if that works okay.
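If you’d rather script the role split than type it, here is a minimal Python sketch that builds the same two `az role assignment create` calls with an explicit `--scope`, so neither grant depends on CLI defaults. The function name and the example subscription ID are placeholders I made up. The scope strings follow Azure’s standard `/subscriptions/<id>/resourceGroups/<name>` format. It only prints the commands, so you can eyeball them before running anything.

```python
import shlex


def build_role_commands(app_id: str, subscription_id: str, resource_group: str) -> list[str]:
    """Build az CLI commands granting Reader on the subscription and
    Contributor on a single resource group."""
    sub_scope = f"/subscriptions/{subscription_id}"
    rg_scope = f"{sub_scope}/resourceGroups/{resource_group}"
    grants = [
        ("Reader", sub_scope),      # read-only view across the subscription
        ("Contributor", rg_scope),  # write access limited to the backup targets
    ]
    commands = []
    for role, scope in grants:
        args = ["az", "role", "assignment", "create",
                "--assignee", app_id, "--role", role, "--scope", scope]
        commands.append(shlex.join(args))  # quote arguments safely for a shell
    return commands


if __name__ == "__main__":
    # Placeholder values: substitute your real AppID and subscription ID.
    for cmd in build_role_commands("AppID", "00000000-0000-0000-0000-000000000000", "BackupTest"):
        print(cmd)
```

Scoping the Contributor grant to one resource group keeps the blast radius small if the SPN’s password leaks. Widening it later is a one-line change to the `grants` list.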

There we go. Now that I’ve added a data source I get a different dashboard page.

Let’s protect some assets then. Here’s my VM.

And if I click on the pane, I get this sub-pane.

Clicking on Create Snapshot, I get this dialog box.

Looking in the Job Log, I can see that the snapshot appears to be successful.

That was a manual snapshot. If I want to automatically protect the VM, then I need to create a protection policy from the Dashboard view.

The policy tab is a little weird.

I can choose to retain a certain number of copies, days, weeks, months, or years. This is not at all clear. I am going to choose 1 month of retention with daily backups at 12:00 AM, which I am guessing means I will have a month of daily backups. The interface is awkward, but I’ve accepted that as par for the course with backup software.
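To pin down what I *expect* that policy to keep, here is a small Python sketch. It lists the snapshots that should exist at a given moment, assuming one snapshot per day at 12:00 AM and that “1 month” means 30 days. Both are my assumptions; CloudPoint may count calendar months differently.

```python
from datetime import datetime, timedelta


def expected_snapshots(now: datetime, retention_days: int = 30, hour: int = 0) -> list[datetime]:
    """Return daily snapshot times (newest first) still inside the retention window.

    Assumes one snapshot per day at `hour`:00 and a fixed-length retention in days.
    """
    cutoff = now - timedelta(days=retention_days)
    # Most recent scheduled run at or before `now`.
    run = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    if run > now:
        run -= timedelta(days=1)
    kept = []
    # Walk backwards one day at a time; keep runs strictly newer than the cutoff.
    while run > cutoff:
        kept.append(run)
        run -= timedelta(days=1)
    return kept


snaps = expected_snapshots(datetime(2018, 6, 1, 9, 30))
print(len(snaps), snaps[0], snaps[-1])
# 30 2018-06-01 00:00:00 2018-05-03 00:00:00
```

If my reading of the policy is right, the snapshot list for a protected asset should plateau at around 30 entries once the policy has run for a month.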

Now I can go back to the Policies section of the protected VM and assign it a backup policy.

Wait, whut? Why?

Ah, I see: I created a Disk policy, not a Host policy. I am trying to protect a host, so I cannot assign a Disk policy. Why not filter the policy list to show only the policies that apply to the selected object?
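The filter I’m asking for is not complicated. Here’s a minimal Python sketch of it. The `Policy` record, its `applies_to` field, and the `AzureGoldDisk` name are hypothetical; I have no idea how CloudPoint models policies internally. The point is just that the policy picker could hide anything whose target type doesn’t match the selected asset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    name: str
    applies_to: str  # hypothetical field: "host" or "disk"


def assignable_policies(policies: list[Policy], asset_type: str) -> list[Policy]:
    """Offer only the policies whose target type matches the selected asset."""
    return [p for p in policies if p.applies_to == asset_type]


# "AzureGoldDisk" is a made-up name for the Disk policy I created by mistake.
policies = [Policy("AzureGoldDisk", "disk"), Policy("AzureGoldHost", "host")]
print([p.name for p in assignable_policies(policies, "host")])  # ['AzureGoldHost']
```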

I created an AzureGoldHost policy and all appears well.

The AWS side of things doesn’t use an IAM role or anything fancy like that. Instead, it just wants the access key and secret key for an account. I created a user account with the AmazonEC2FullAccess and AmazonRDSFullAccess managed policies. In the future, I would prefer that CloudPoint provide a predefined role you can copy and paste in.
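If you want to script that user setup, here’s a sketch against the boto3 IAM client (`create_user`, `attach_user_policy`, `create_access_key`). It reproduces the same two broad AWS-managed policies I used. It is *not* a least-privilege policy, and it is not something Veritas supplies. The user name in the usage line is a placeholder.

```python
# AWS-managed policies attached to the CloudPoint user (same as the manual setup).
MANAGED_POLICY_ARNS = [
    "arn:aws:iam::aws:policy/AmazonEC2FullAccess",
    "arn:aws:iam::aws:policy/AmazonRDSFullAccess",
]


def create_cloudpoint_user(iam, user_name: str) -> tuple[str, str]:
    """Create an IAM user, attach the managed policies, and return
    (access_key_id, secret_access_key) for pasting into CloudPoint.

    `iam` is expected to be a boto3 IAM client, e.g. boto3.client("iam").
    """
    iam.create_user(UserName=user_name)
    for arn in MANAGED_POLICY_ARNS:
        iam.attach_user_policy(UserName=user_name, PolicyArn=arn)
    key = iam.create_access_key(UserName=user_name)["AccessKey"]
    # The secret is only returned once; store it somewhere safe.
    return key["AccessKeyId"], key["SecretAccessKey"]
```

Usage would be something like `create_cloudpoint_user(boto3.client("iam"), "cloudpoint-svc")`. Passing the client in, rather than creating it inside the function, also makes the function easy to exercise without touching a real account.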

I added the us-east-1 region to the configuration and created a new protection policy called AWSGoldHost. I created a new instance in my AWS account, and after 10 minutes it still hadn’t shown up as a protectable asset. There’s no refresh button, so I guess I have to wait it out. It would be nice to be able to automatically assign protection based on a metadata tag, and that goes for Azure, AWS, and any other target that supports tagging. Going in and manually assigning a protection policy doesn’t scale.
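Here’s a rough Python sketch of the tag-driven assignment I have in mind. The tag key `cloudpoint-policy`, the shape of the asset records, and the instance IDs are all my inventions. CloudPoint (at least the version I tried) has nothing like this. Unknown policy names are skipped rather than guessed at.

```python
def policy_assignments(assets: list[dict], known_policies: set[str],
                       tag_key: str = "cloudpoint-policy") -> dict[str, str]:
    """Map asset IDs to policy names based on a metadata tag.

    Assets without the tag, or whose tag names a policy that doesn't exist,
    are left unassigned.
    """
    assignments = {}
    for asset in assets:
        wanted = asset.get("tags", {}).get(tag_key)
        if wanted in known_policies:
            assignments[asset["id"]] = wanted
    return assignments


# Hypothetical inventory spanning AWS and Azure.
assets = [
    {"id": "i-0abc", "tags": {"cloudpoint-policy": "AWSGoldHost"}},
    {"id": "i-0def", "tags": {"Name": "scratch"}},
    {"id": "BackupTest", "tags": {"cloudpoint-policy": "AzureGoldHost"}},
]
print(policy_assignments(assets, {"AWSGoldHost", "AzureGoldHost"}))
# {'i-0abc': 'AWSGoldHost', 'BackupTest': 'AzureGoldHost'}
```

Run on every discovery cycle, something like this would protect a new instance as soon as it shows up, with no clicking required.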

And after another few minutes the instance appeared, and I was able to add a protection policy and initiate a snapshot. Looks like all is well with the AWS side too.

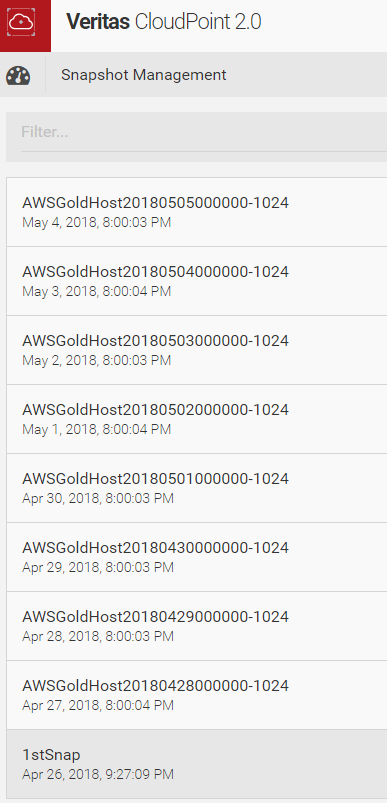

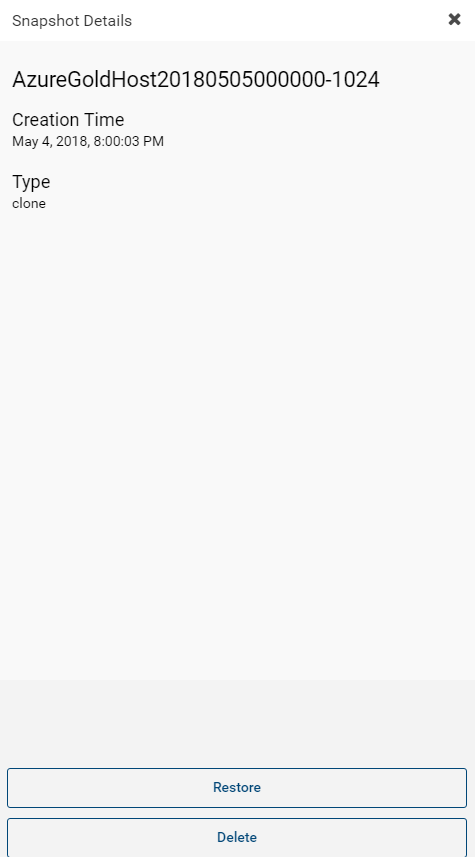

At this point I decided to let things run for a little bit and verify that the policies I had created were actually working. I came back a week later and I could see that the AWS instance was grabbing a snapshot every day, just like I asked it to.

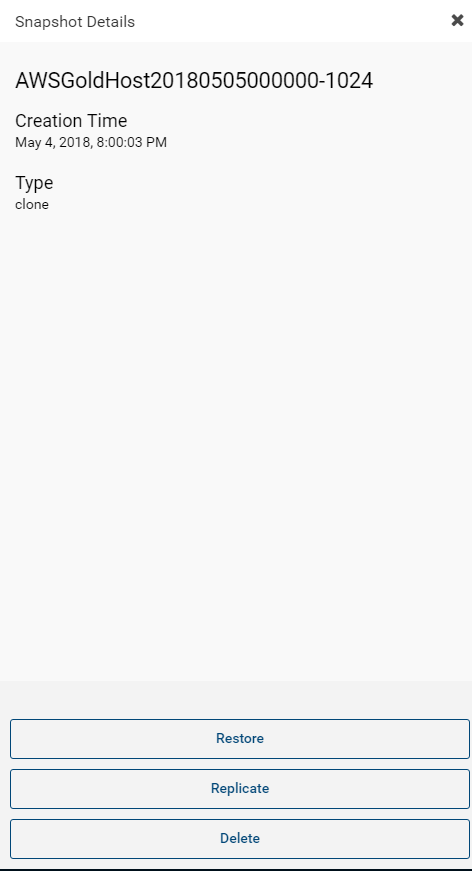

If I select a specific snapshot, I get a side pane that offers a few actions.

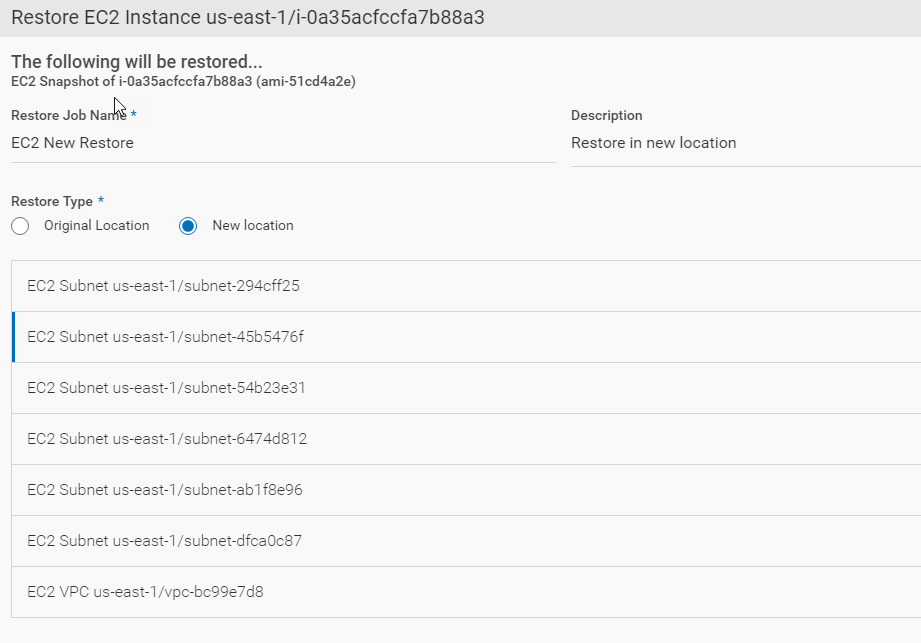

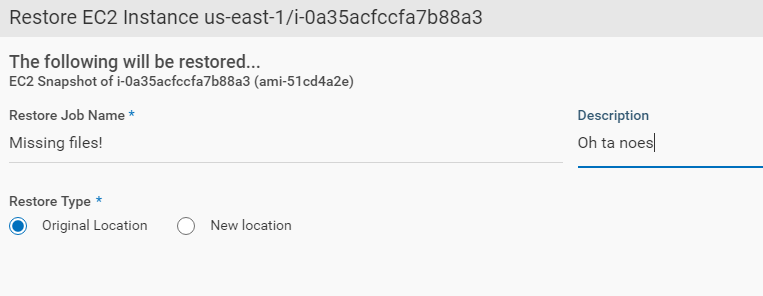

In this case I am going to try to restore the instance to a “New Location,” which in the world of AWS and CloudPoint means restoring it to a different destination subnet.

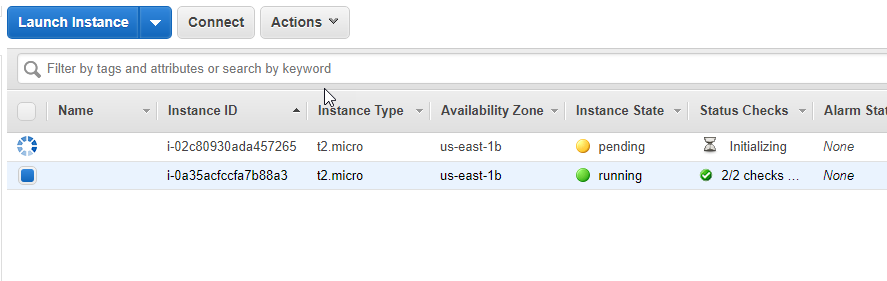

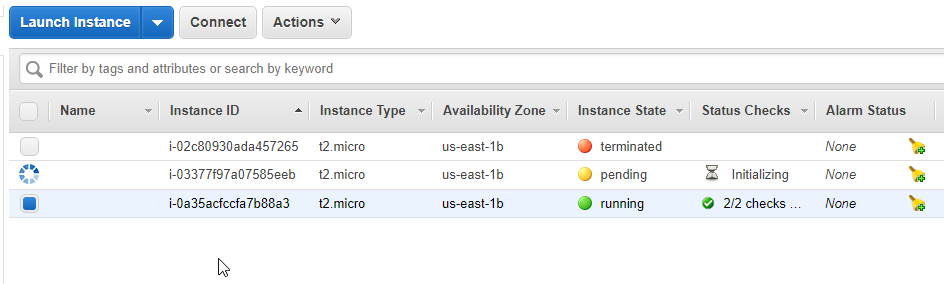

After kicking off the job, I can see in the AWS console that a new instance has been created and is in the process of initializing.

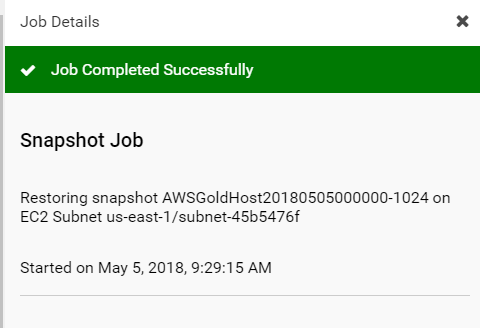

CloudPoint confirms that the job ran successfully.

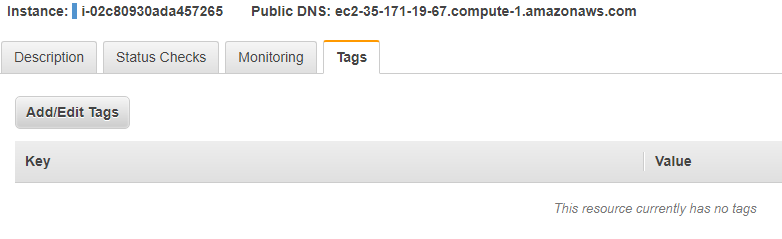

Going back to the AWS console, I checked to see if CloudPoint had added any type of metadata tagging to the restored instance. Sadly, it had not.

I would really like the option to add some default tags to instances that are recovered. I would also like CloudPoint to add some tags to protected instances, so it’s easy to check from the AWS side if something has been protected and what policy has been applied.
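Until CloudPoint does this itself, you could tag restored instances after the fact with the boto3 EC2 client’s `create_tags` call. This is a sketch. The tag keys (`restored-from`, `restore-policy`, `restored-at`) are names I made up, not anything CloudPoint reads or writes.

```python
from datetime import datetime, timezone


def tag_restored_instance(ec2, instance_id: str, source_instance_id: str,
                          policy: str) -> list[dict]:
    """Apply descriptive tags to a restored instance and return the tags used.

    `ec2` is expected to be a boto3 EC2 client, e.g. boto3.client("ec2").
    """
    tags = [
        {"Key": "restored-from", "Value": source_instance_id},
        {"Key": "restore-policy", "Value": policy},
        {"Key": "restored-at",
         "Value": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")},
    ]
    ec2.create_tags(Resources=[instance_id], Tags=tags)
    return tags
```

The same call with a `protection-policy` style tag would cover the second wish: seeing from the AWS side which instances are protected and by what.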

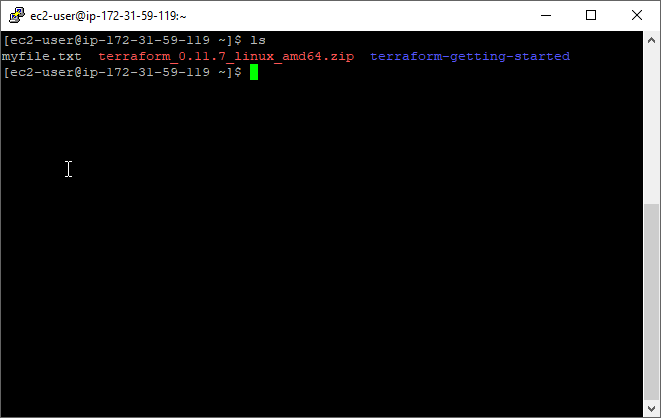

I logged into the instance to make sure that some files I had dropped on the original instance were there.

And they were, so I would call this a success. I also tested a restore to the original location.

The restore will create the instance in the same subnet, but it will not overwrite the existing instance.

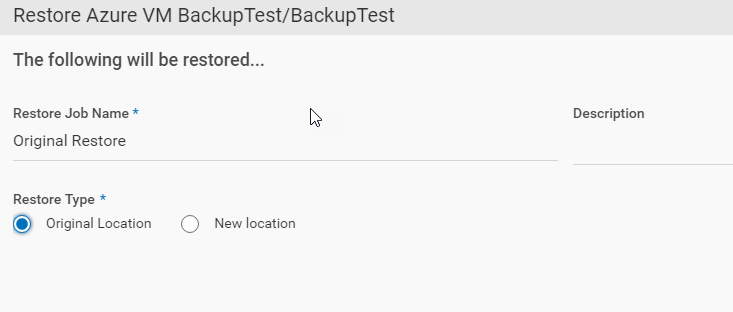

Next, I attempted to restore an Azure VM. I selected a snapshot and clicked Restore, just like the previous attempt.

I chose to restore it to the original location, which in the world of Azure means the same Resource Group.

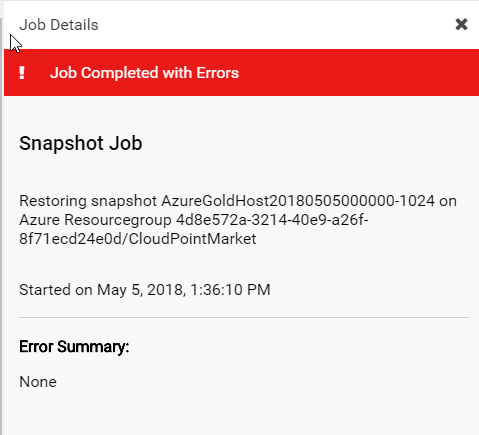

The job was, um, not successful.

Sadly, this is ALL the information the CloudPoint interface gives you. Examining the job log from the GUI gives no additional clue as to what happened. My first thought was that I had been too restrictive with the permissions I gave the CloudPointSPN account, so I granted it Contributor access to the entire Azure subscription.

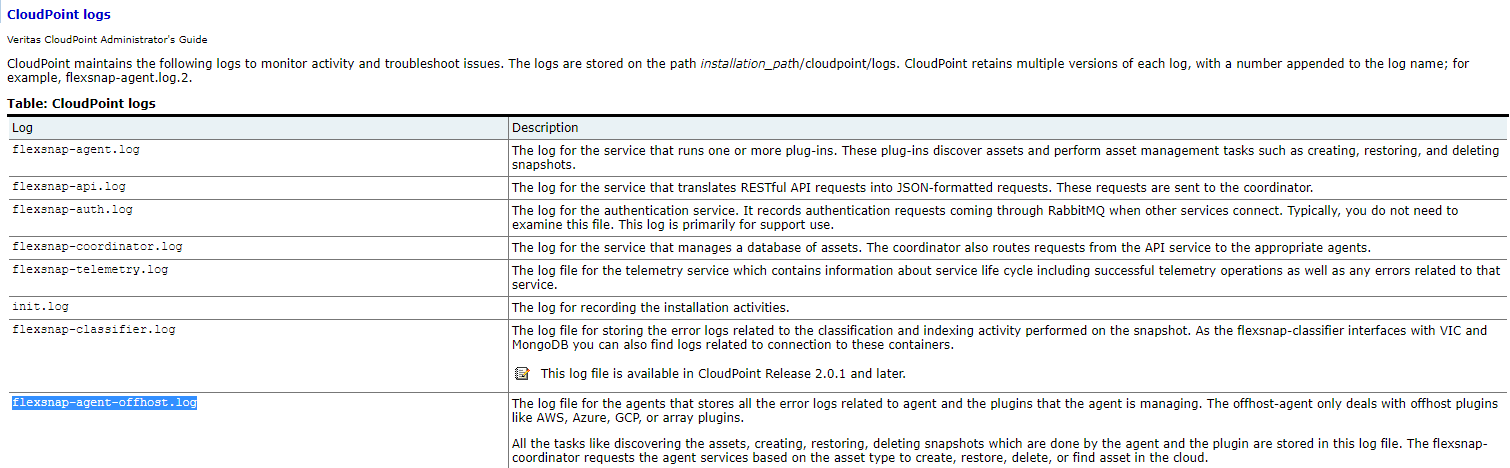

The job failed again. I dug into the documentation a little and found this page detailing the log files, their contents, and their locations.

Based on this document, I should be looking for the flexsnap-agent-offhost.log file. So I SSH’d into the CloudPoint box and tried to find that file. It did not exist. But I was able to find the log files in /cloudpoint/logs. The flexsnap-agent.log turned out to have the information I needed. The last error for restore was the following:

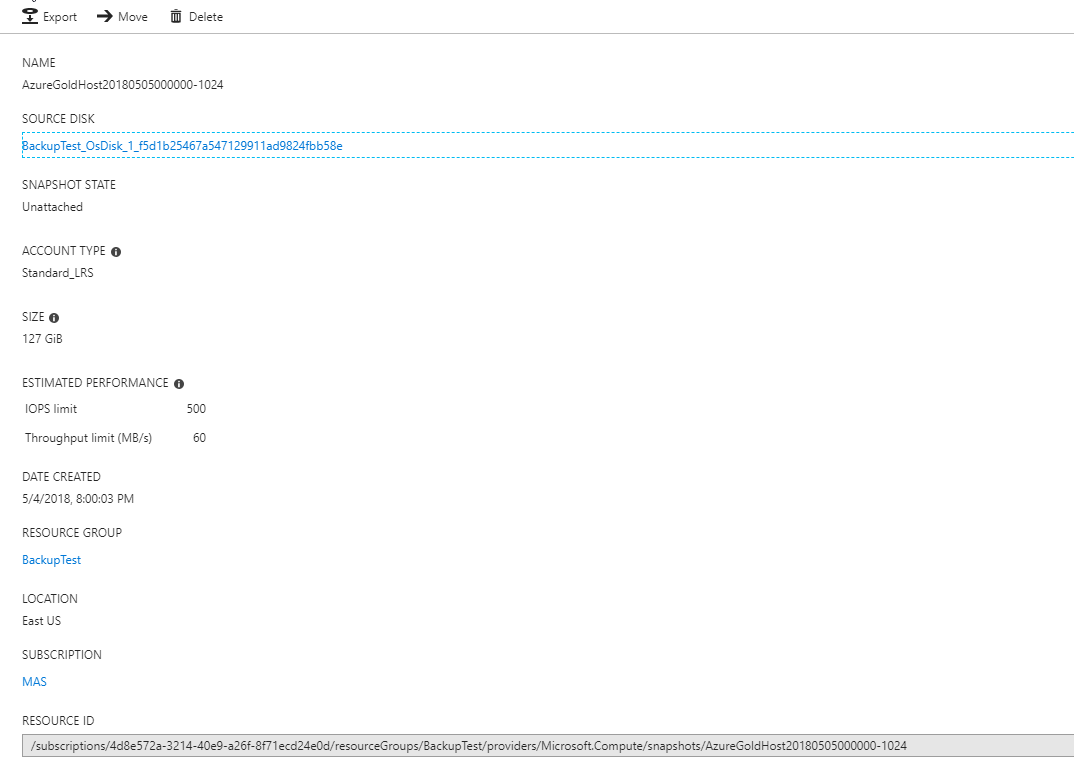

OperationFailed: Failed to restore snapshot: Source snapshot does not exist
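If you end up in the same spot, you can script the search for the most recent failure. On the box itself, `grep OperationFailed /cloudpoint/logs/flexsnap-agent.log | tail -n 1` does the job. The Python sketch below does the same thing. It assumes each error sits on a single line containing `OperationFailed`, which matched what I saw but isn’t a documented log format.

```python
from pathlib import Path
from typing import Optional

# Where the logs actually lived on my CloudPoint 2.0.1 box
# (not the flexsnap-agent-offhost.log file the docs pointed to).
LOG_PATH = Path("/cloudpoint/logs/flexsnap-agent.log")


def last_error(log_path: Path = LOG_PATH, marker: str = "OperationFailed") -> Optional[str]:
    """Return the last line containing `marker`, or None if no line matches."""
    last = None
    # Stream the file line by line so a large log doesn't need to fit in memory.
    with log_path.open(encoding="utf-8", errors="replace") as fh:
        for line in fh:
            if marker in line:
                last = line.rstrip("\n")
    return last


if __name__ == "__main__":
    print(last_error() or "no OperationFailed entries found")
```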

I reviewed the accompanying json further up in the log, and I was able to verify the snapshot name and resource ID.

Azure: Restore instance snapshot azure-snapvm-4d8e572a321440e9a26f8f71ecd24e0d:backuptest:azuregoldhost20180505000000-1024
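To line that identifier up against what the portal shows, it can help to pull it apart. Here’s a Python sketch. The field layout is purely my guess from this single log line: a 32-character hex string, the resource group, then the policy name glued to a timestamp, then a numeric suffix. I don’t know what the hex string or the `-1024` suffix represent. Under that guess, the timestamp decodes to 2018-05-05 00:00:00, which lines up with the 12:00 AM daily schedule on the AzureGoldHost policy.

```python
import re
from datetime import datetime

# Layout inferred from one log line; a guess, not a documented format.
SNAPSHOT_ID = re.compile(
    r"^azure-snapvm-(?P<guid>[0-9a-f]{32})"        # meaning unknown
    r":(?P<resource_group>[^:]+)"
    r":(?P<policy>[a-z0-9]+?)(?P<stamp>\d{14})"    # policy name + YYYYMMDDHHMMSS
    r"-(?P<suffix>\d+)$"                           # meaning unknown
)


def parse_snapshot_id(snapshot_id: str) -> dict:
    """Split a CloudPoint Azure snapshot identifier into its (guessed) parts."""
    m = SNAPSHOT_ID.match(snapshot_id)
    if m is None:
        raise ValueError(f"unrecognized snapshot id: {snapshot_id}")
    fields = m.groupdict()
    fields["taken_at"] = datetime.strptime(fields.pop("stamp"), "%Y%m%d%H%M%S")
    return fields


info = parse_snapshot_id(
    "azure-snapvm-4d8e572a321440e9a26f8f71ecd24e0d:backuptest:azuregoldhost20180505000000-1024"
)
print(info["resource_group"], info["policy"], info["taken_at"])
# backuptest azuregoldhost 2018-05-05 00:00:00
```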

I checked in the Azure portal, and sure enough, the snapshot does exist.

It was at that point that I gave up. The product should be able to accomplish this simple task without me having to SSH into the box and go through log files to troubleshoot. I am having NetBackup flashbacks at this point, and I am not interested in going down that long, tortuous path to ruin.

As a side note, as I was letting these policies cook for a week, I got a call from someone at Veritas asking how things were going. That’s nice, I guess, but personally I don’t want anyone calling me. If I like the product and want to purchase it, I will reach out.

Based on my interaction with the CloudPoint product here are my thoughts.

Is this product ready to protect production-level workloads at scale in the cloud? Not really. There’s a lot of good stuff here, but the product needs more time to bake in features and functionality. I think Veritas has a good solution here, and it’s going in the right direction. My impression is that the team behind CloudPoint is fairly small. If Veritas were waiting to get to MVP before investing heavily, then I would say that threshold has been crossed. Time to open the floodgates and get this product to a maturity level that I would feel comfortable recommending to clients.
