I just finished updating my Azure Stack ASDK to the latest 1901 version. Before the upgrade I was messing around with the Kubernetes cluster offering, and I wanted to get that added back to my ASDK now that I’ve performed the update. I rushed through the process, and of course got an error. And that error was not very helpful. Just in case you’re like me, and missed a step in the setup for K8s on Azure Stack, here are the various error messages and the solution.

The TL;DR? Add the Service Principal you created for K8s as a Contributor on the subscription the cluster will be running in.

You’re welcome.

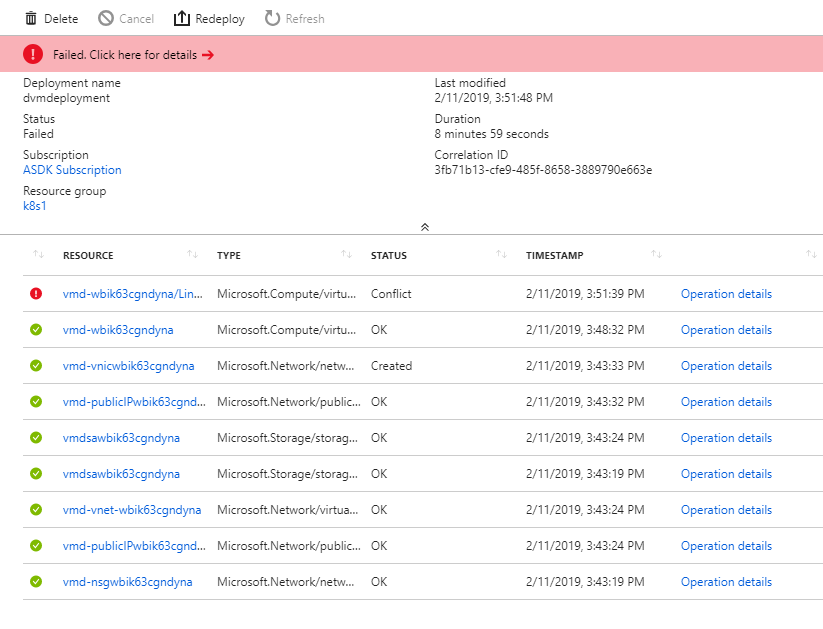

After attempting the deployment I got this.

Which is not terribly helpful. What does Conflict even mean? If you drill down to the Operation details, you get the following status message:

{

"status": "Failed",

"error": {

"code": "ResourceDeploymentFailure",

"message": "The resource operation completed with terminal provisioning state 'Failed'.",

"details": [

{

"code": "VMExtensionProvisioningError",

"message": "VM has reported a failure when processing extension 'LinuxCustomScriptExtension'. Error message: Enable failed: failed to execute command: command terminated with exit status=1\n[stdout]\n\n[stderr]\n"

}

]

}

}

Which basically means that the Custom Script Extension for Linux exited with an Error Code of 1. Again, this does not tell me anything that is helpful. Since the deployment leaves the virtual machine up and running, I connected via SSH and started to poke around. According to the troubleshooting docs on Microsoft’s site, I can check the log file located in /var/log/waagent.log for more info. I did that and got this helpful error message:

2019/02/11 16:25:26.423933 WARNING ExtHandler Unknown property: extensionHandlers.properties.upgradePolicy

Repeated about a hundred times. That didn’t really point me in the right direction either. Nevertheless, I am persistent. After a bit more poking around I found another log I needed to look at /var/log/azure/custom-script/handler.log. That is the log which contains the error:

0 event="failed to handle" error="failed to execute command: command terminated with exit status=1"

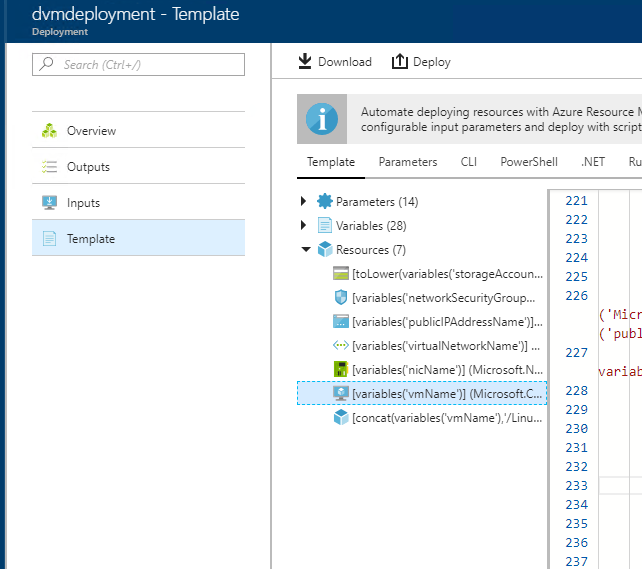

That’s the error I was seeing in the Azure Stack portal. Clearly something was amiss with the commands being run by the Custom Script extension. Now I needed to dig a little into the commands being handed to the Custom Script Extension. I looked at the actual template being submitted for this deployment. In that template, under the Resources category I selected the Virtual Machine.

In the JSON there is a nested virtual machine extension resource with the following command to execute:

"[concat(variables('scriptParameters'), ' PUBLICIP_FQDN=', '\"', reference(resourceId('Microsoft.Network/publicIPAddresses',variables('publicIPAddressName')),'2015-06-15').dnsSettings.fqdn,'\"',' /bin/bash /opt/azure/containers/script.sh >> /var/log/azure/acsengine-kubernetes-dvm.log 2>&1')]"

I admit that isn’t easy to look at. The key portion of the script is that the logging is going to /var/log/azure/acsengine-kubernetes-dvm.log. Looking through that log I finally found the following error message:

failed to load apimodel: failed to get client: resources.ProvidersClient#List: Failure responding to request: StatusCode=403 -- Original Error: autorest/azure: Service returned an error. Status=403 Code="AuthorizationFailed" Message="The client 'b6d59fc6-e848-4612-aec0-817918cf6b8a' with object id 'b6d59fc6-e848-4612-aec0-817918cf6b8a' does not have authorization to perform action 'Microsoft.Resources/subscriptions/providers/read' over scope '/subscriptions/39c86ab5-8ac8-422b-b570-5fc6092c8702'."

Again, not the prettiest thing to look at, but the main thing to get out of this is that the client does not have authorization to perform an action on the subscription. That triggered in my mind the fact that when you fill out the deployment fields for the Kubernetes template you need to supply a Service Principal that has Contribute permissions on the subscription. I had reused the Service Principal I created last time, but I had not granted it permissions on the new subscription that was created after updating the ASDK.

I guess that means that next time I should RTFM, even if I have already read it once before. That being said, the troubleshooting process could have been easier if the logging messages had been surfaced up through the Azure portal. When a script fails, it should pass a helpful message through stderr and the invoking script should grab that error and surface it up through the layers. That way, instead of getting the incredibly unhelpful “command terminated with exit status=1”, I would instead have received the actual error message and would not have to dig through configs and logs to find it.

May 23, 2026

May 4, 2026